PIFall: A Pressure Insole-Based Fall Detection System for the Elderly Using ResNet3D

Wei Guo, Xiaoyang Liu, Chenghong Lu, Lei Jing

Electronics, 2024

Recognizing Complex Activities by Combining Sequences of Basic Motions

Chenghong Lu, Wu-Chun Hsu, Lei Jing

Electronics, 2024

Human Activity Recognition via Wi-Fi and Inertial Sensors with Machine Learning

Wei Guo, Shunsei Yamagishi, Lei Jing

IEEE Access, 2024

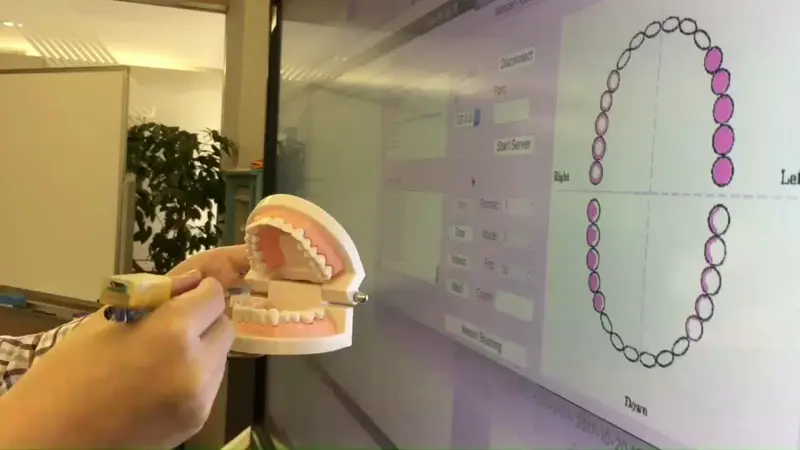

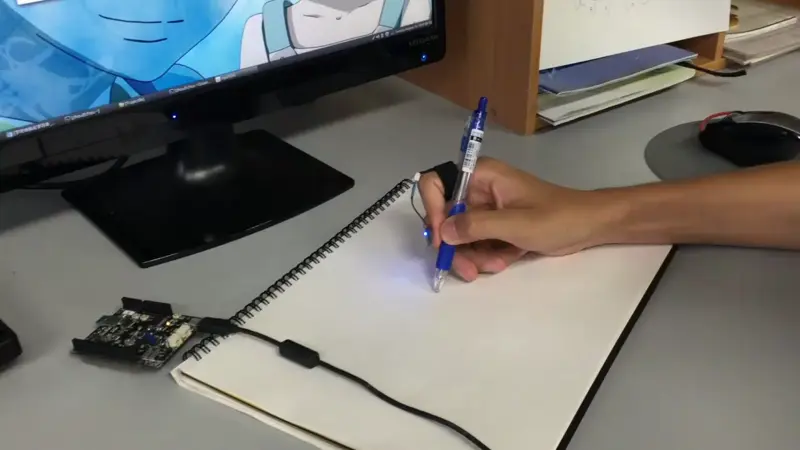

A Lightweight Method to Detect the Insufficient Brushing Regions Using a Six-Axis Inertial Sensor

Lei Jing

2017 IEEE 6th Global Conference on Consumer Electronics (GCCE 2017)

Magic Ring: A Self-contained Gesture Input Device on Finger

Lei Jing, Zixue Cheng, Yinghui Zhou, Junbo Wang, Tongjun Huang

Conference: Proceedings of the 12th International Conference on Mobile and Ubiquitous Multimedia

Welcome to UoA iSensing Lab!

We envision a future where highly personalized services are seamlessly integrated into daily activities. To achieve this vision, we:

- Develop sensing technologies to digitize human motion and perception

- Design intelligent fusion methods for multi-sensory data

- Create proof-of-concept systems as cornerstones for further practical applications

We are actively seeking students at all levels. If you are interested in joining us, please read the “Application” page first.

Team

Faculty

| Lei Jing | 荊 雷 | Professor |

Special Researcher

| Daisuke MIYATA | ||

| Chenghong LU | 盧 成鸿 |

Secretary

| Michiko Hoshi | 星美智子 | Secretary |

Students

| Wei GUO | 郭 葳 | D3 |

| Zeping YU | 于 泽平 | D2 |

| Kazuma SATO | 佐藤 一摩 | M2 |

| Shunsuke SUZUKI | 鈴木 竣介 | M1 |

| Geng Tiantian | 耿 甜甜 | M1 |

| Ye Li | 李 燁 | M1 |

| Zhang Yuren | 張 馭仁 | M1 |

| Pu Zhongnan | 蒲 中南 | M1 |

| Zhang Yunhao | 張 雲豪 | M1 |

| Charles Chisom Prince | | M1 |

| Ryoma SAKASAI | 逆井 竜馬 | S4 |

| Tsubasa IWAMOTO | 岩本 翼 | S4 |

| Taise YAKUSHINJI | 薬真寺 泰生 | S4 |

| Atsuya WATANABE | 渡邉 温也 | S4 |

| Shun KUGIMIYA | 釘宮 舜 | S4 |

| Ryoma HASHIMOTO | 橋本 凌真 | S4 |

| Hyakuda Issei | 百田 一星 | S3 |

| Rossi Andy Takuya | ロッシ アンディ拓也 | S3 |

| Ichikawa Taiki | 市川 大輝 | S3 |

| Outake Kenshin | 大竹 健心 | S3 |

| Zhang Youchen | 張 友辰 | S1 |

LAB NEWS

Description

We are looking for highly motivated students with an interest in electronics engineering, signal processing, machine learning, and cyber-physical systems. The ideal candidate will be enrolled at the PhD level in the Computer Science and Engineering Department at The University of Aizu. The student will be supervised by Dr. Lei JING and will join the Human Intelligent Sensing Lab, based at The University of Aizu, Aizu-Wakamatsu, Japan.

Application

Applications should include a CV and supporting documents, and should be sent by email to jing_lab at u-aizu.ac.jp. We will then arrange an online interview with the applicant.

Contact us

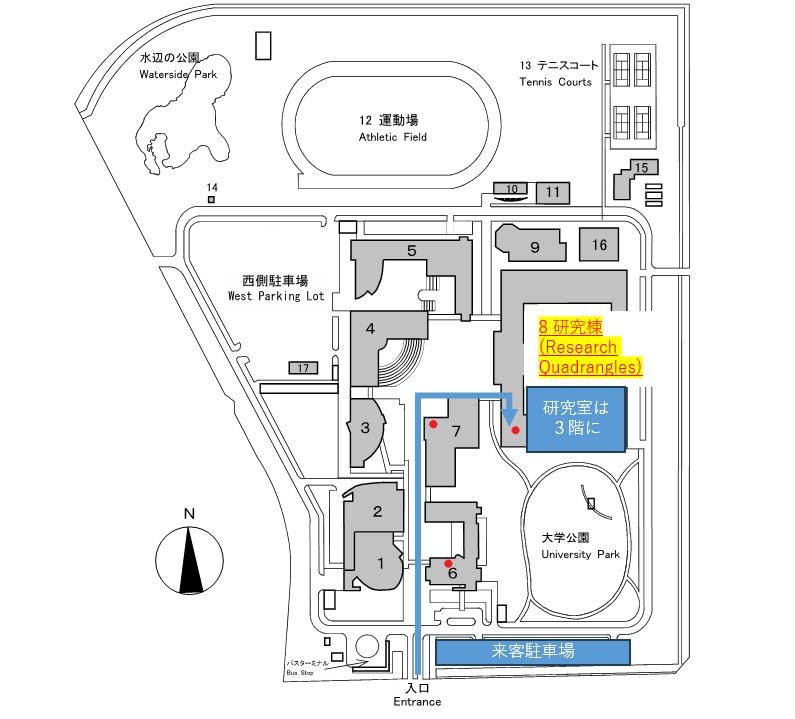

UoA Human Intelligent Sensing Lab

Research Quadrangles 343 (Faculty), 344 (Student)

The University of Aizu

〒965-0006

Fukushima, Aizuwakamatsu, Itsukimachi Oaza Tsuruga, Kamiiawase−90

Email: jing_lab at u-aizu.ac.jp